Building AI tools to visualize risk and streamline corporate processes

Role

Sr. Product Designer

Timeline

12 weeks

Platforms

Web

Overview

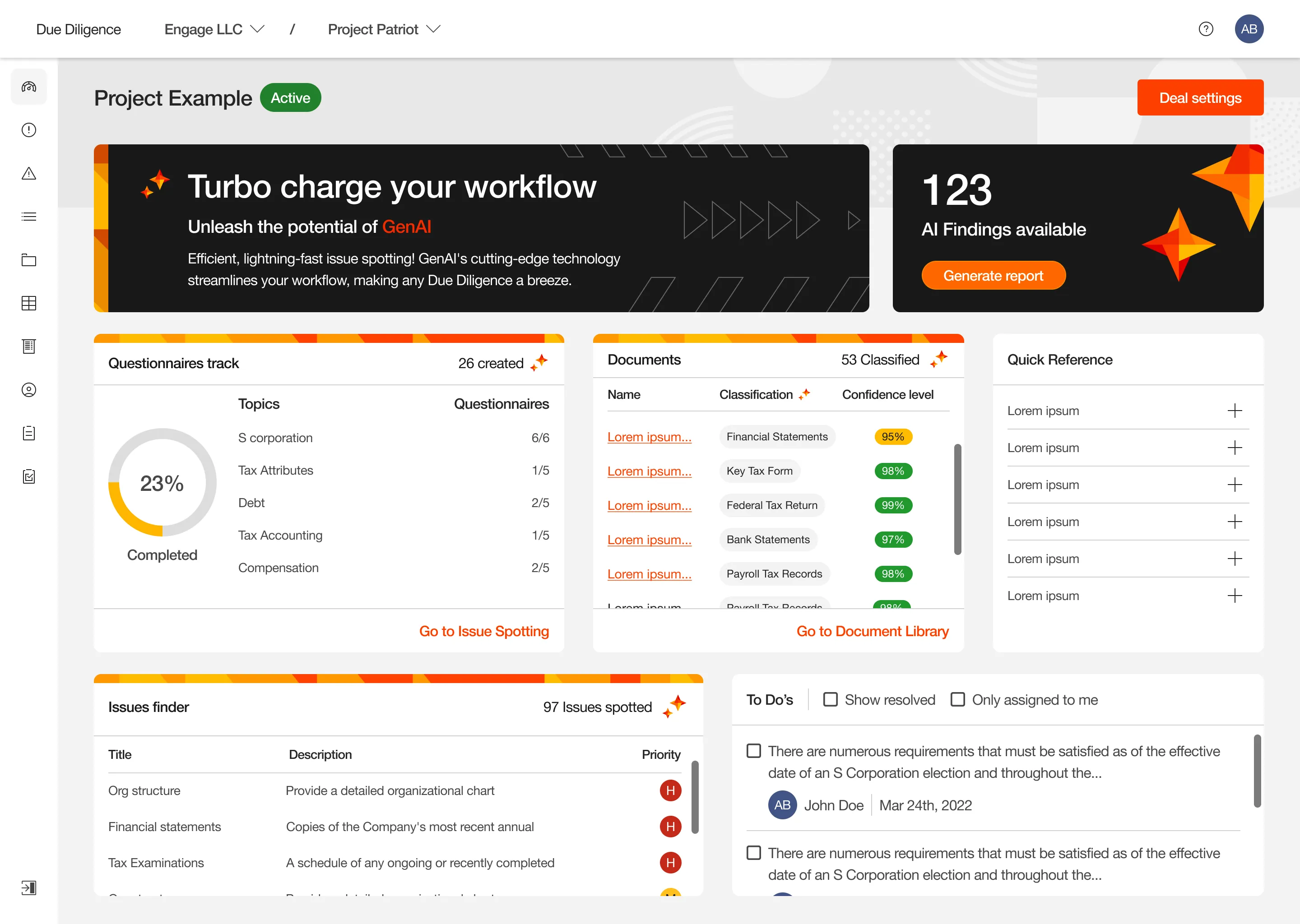

Systematizing Intelligence

Key Results

+31%

Increase in early adoption, driven by a flatter learning curve and greater trust in the system's predictions.

2.3x

Streamlined due diligence processes, significantly reducing execution time for auditing teams.

99%

Technical and visual consistency of AI-driven components with global brand guidelines.

+6

Micro-frontends designed, tested, and integrated into shared libraries to power scalable internal workflows.

The Situation

Optimizing demanding workflows

1. Collection

Requesting and uploading hundreds of documents for each audited company.

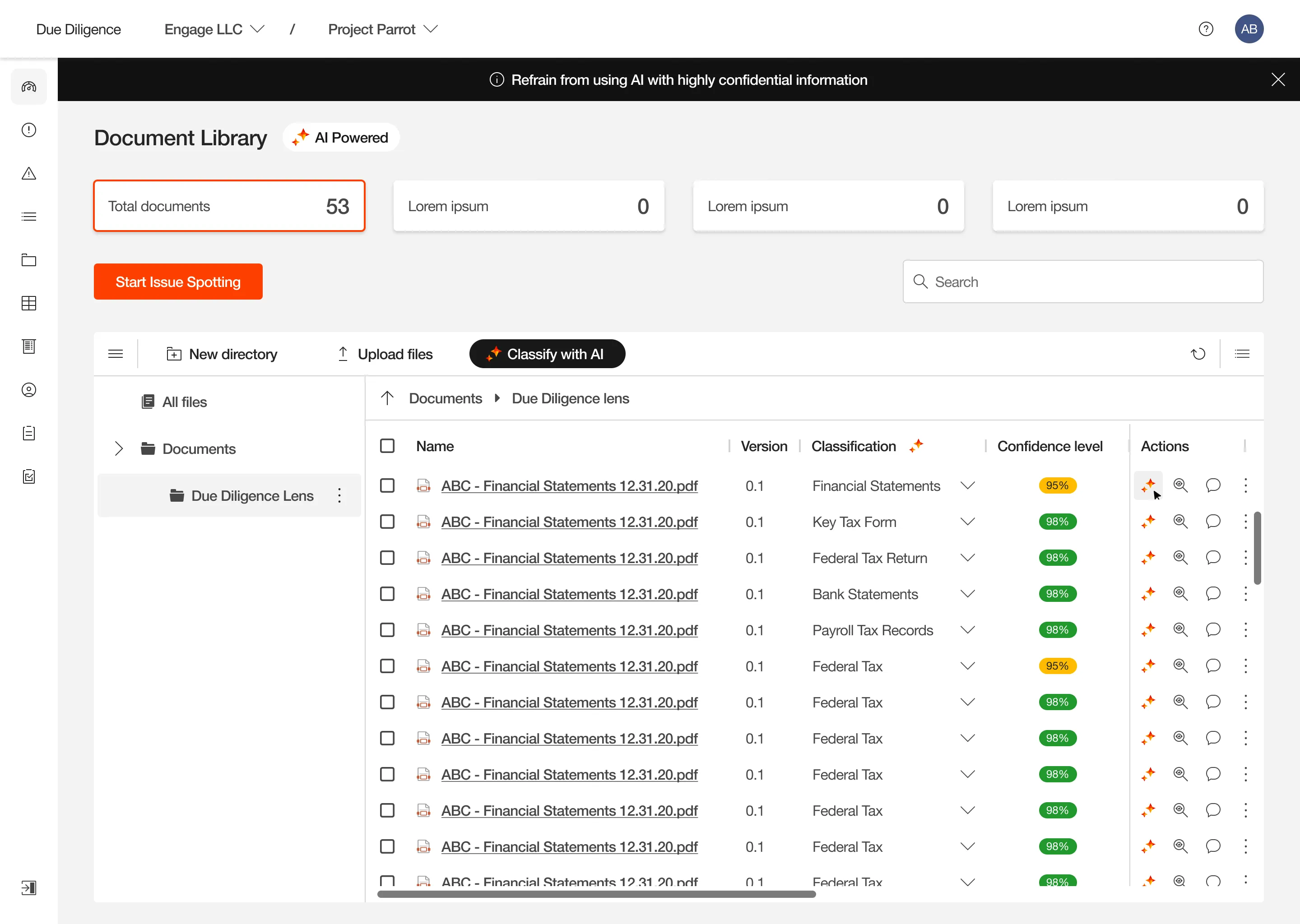

2. Classification

Manually naming and categorizing the massive volume of files on the platform.

3. Analysis

Intensive, line-by-line reading to extract information and answer questionnaires.

4. Diagnosis

Identifying legal anomalies and transferring those findings into a risk matrix.

5. Reporting

Consolidating detected issues to generate the final executive document.

Discovery

The Human Factor

The "Black Box" Fear

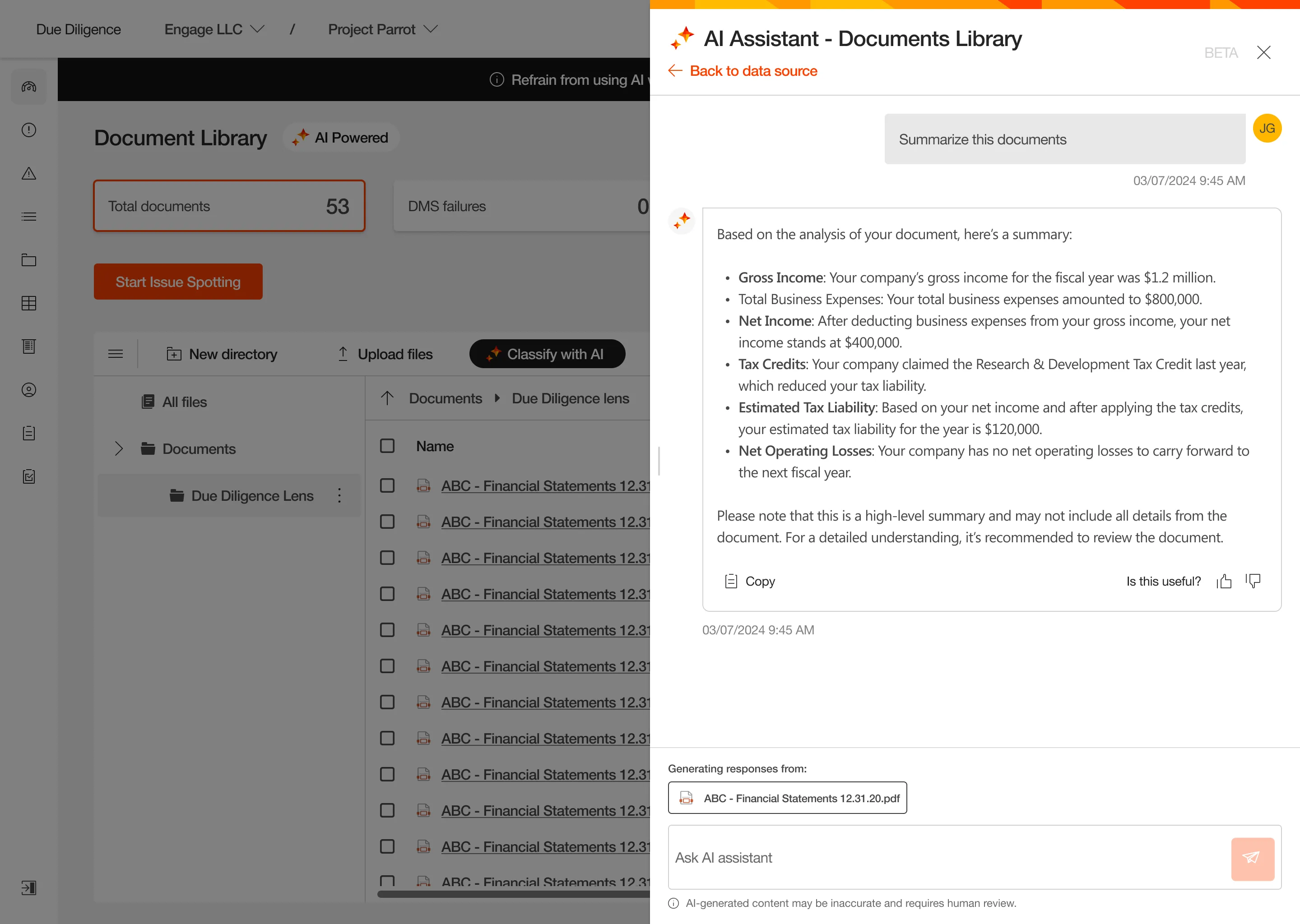

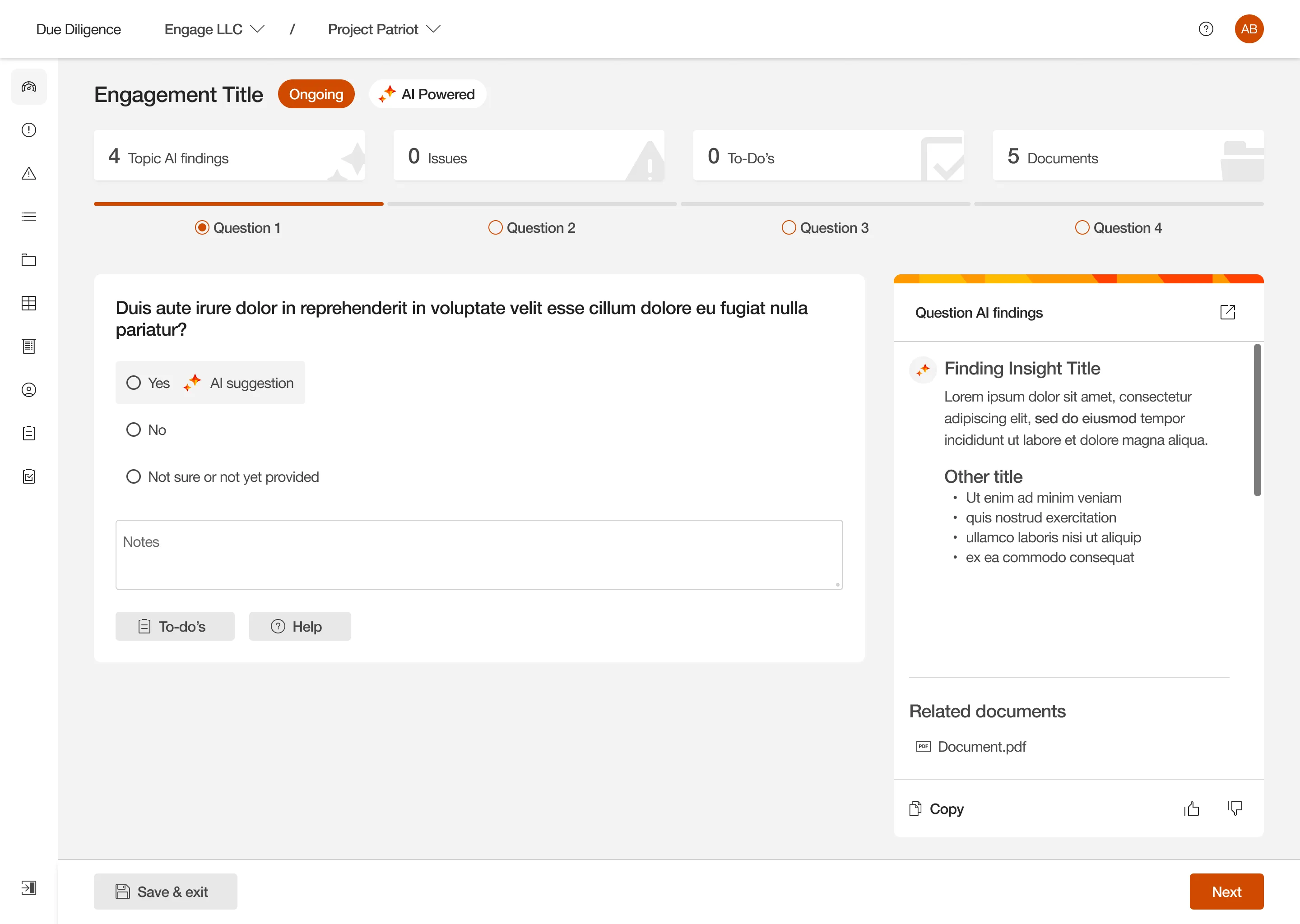

Approximately 40% of users distrusted the AI's judgment or feared being replaced. To validate a conclusion, they required full visibility into the machine's reasoning.

Traceability: The Foundation of Trust

Adoption relied on evidence. Every prediction or summary had to include exact citations and direct links to the original document; without a source, there was no validation.
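As an illustration only, the rule "no source, no validation" could be sketched in TypeScript as below. The `Citation` and `Prediction` shapes and both function names are assumptions made for this example, not the product's actual data model.

```typescript
// Hypothetical shapes for illustration; not taken from the real product.
interface Citation {
  documentId: string; // identifier of the source document
  page: number;       // page where the quoted passage appears
  quote: string;      // exact text the prediction relies on
  url: string;        // direct link to the original document
}

interface Prediction {
  summary: string;
  citations: Citation[];
}

// A prediction counts as traceable only if it has at least one citation
// and every citation carries both a quote and a link.
function isTraceable(p: Prediction): boolean {
  return (
    p.citations.length > 0 &&
    p.citations.every((c) => c.quote.trim() !== "" && c.url.trim() !== "")
  );
}

// Split predictions into those that can be shown to the auditor
// and those withheld because they lack a verifiable source.
function partitionByTraceability(predictions: Prediction[]) {
  const shown: Prediction[] = [];
  const withheld: Prediction[] = [];
  for (const p of predictions) {
    (isTraceable(p) ? shown : withheld).push(p);
  }
  return { shown, withheld };
}
```

In this sketch an uncited prediction is never displayed at all, which is one possible reading of the principle; a softer variant could display it with a visible warning instead.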

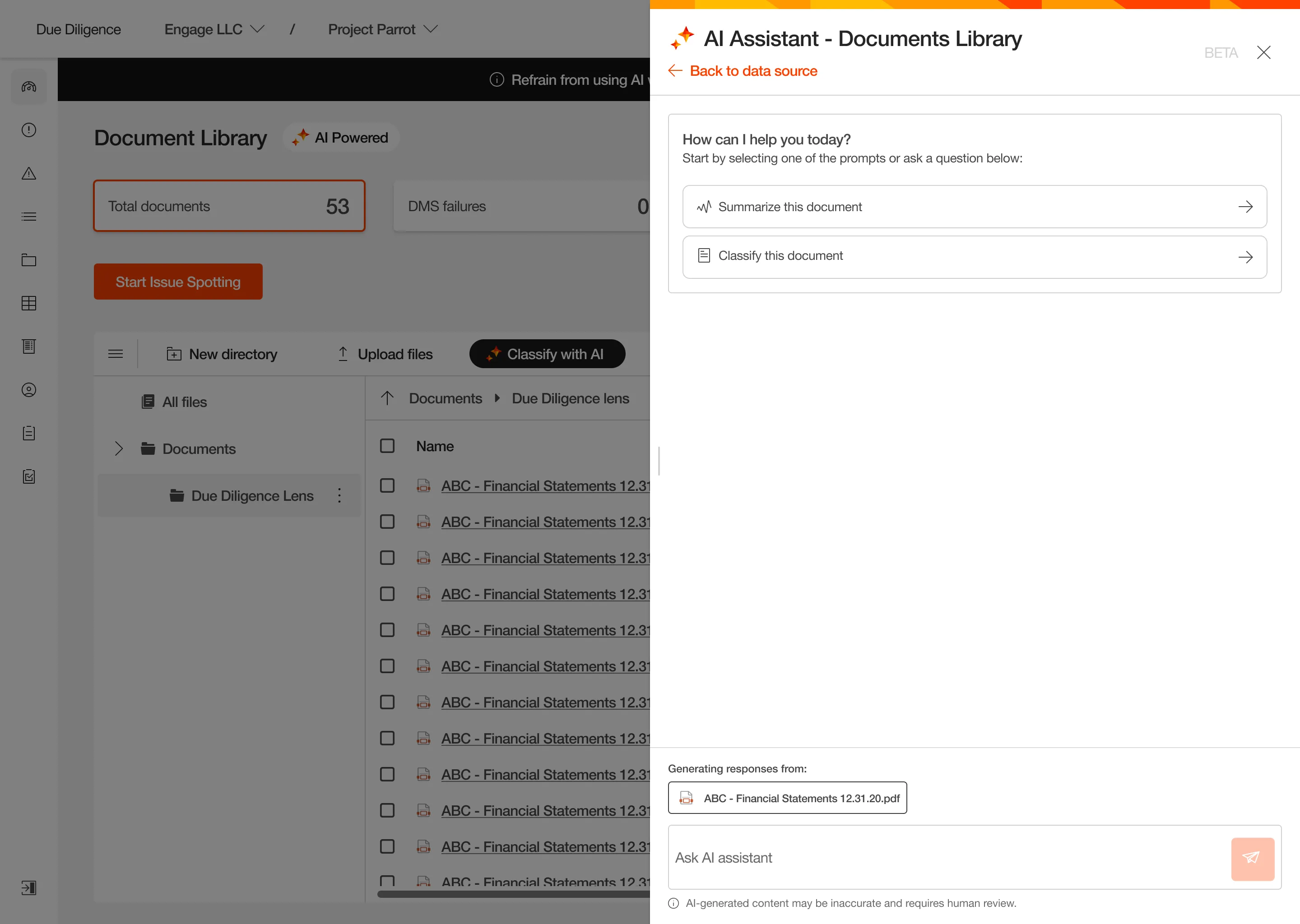

Context Switching Fatigue

Auditors rejected external chats or new tabs. Assistance had to occur natively within their workspace to avoid fracturing their reading flow.

Discovery

Analyzing the tools

We discovered that an isolated conversational module doesn't eliminate the operational burden. Auditors didn't need to answer questions somewhere else; they needed an AI that automated processes directly within their workflows.

Define

How Might We...

...integrate AI into processes so it acts as a reliable copilot without disrupting current workflows? To solve this, I facilitated a workshop with Business, Product, and Tech leaders, where we defined the three strategic pillars that would shape this new ecosystem:

Transparency

The system must guarantee traceability and full visibility for every piece of information.

Trust

The design must protect expert autonomy; the AI proposes, the auditor decides.

Scalability

A modular extension of current tools, built to scale without friction.
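The Trust pillar ("the AI proposes, the auditor decides") can be pictured as a small state model. The sketch below is a hypothetical illustration: the `Suggestion` type, the `propose`, `decide`, and `committedValues` functions, and the idea of recording who decided are all assumptions, not a description of the shipped system.

```typescript
// Hypothetical model: an AI suggestion stays pending until a human decides.
type Decision = "pending" | "accepted" | "rejected";

interface Suggestion {
  id: string;
  proposedValue: string;
  decision: Decision;
  decidedBy?: string; // auditor who made the call, if any
}

// The AI can only create suggestions in the "pending" state.
function propose(id: string, proposedValue: string): Suggestion {
  return { id, proposedValue, decision: "pending" };
}

// Only an auditor action moves a suggestion out of "pending".
function decide(s: Suggestion, auditor: string, accept: boolean): Suggestion {
  if (s.decision !== "pending") {
    throw new Error(`Suggestion ${s.id} was already decided`);
  }
  return { ...s, decision: accept ? "accepted" : "rejected", decidedBy: auditor };
}

// Nothing reaches downstream artifacts unless it was explicitly accepted.
function committedValues(suggestions: Suggestion[]): string[] {
  return suggestions
    .filter((s) => s.decision === "accepted")
    .map((s) => s.proposedValue);
}
```

The design choice here is that there is no code path by which the AI accepts its own output; acceptance always carries an auditor's name.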

Ideation

Assembling the LEGO

Working alongside a group of auditors, we defined critical activities that, by nature, always required human review. This allowed us to isolate time-intensive tasks where AI could truly streamline the workload.

Ideation

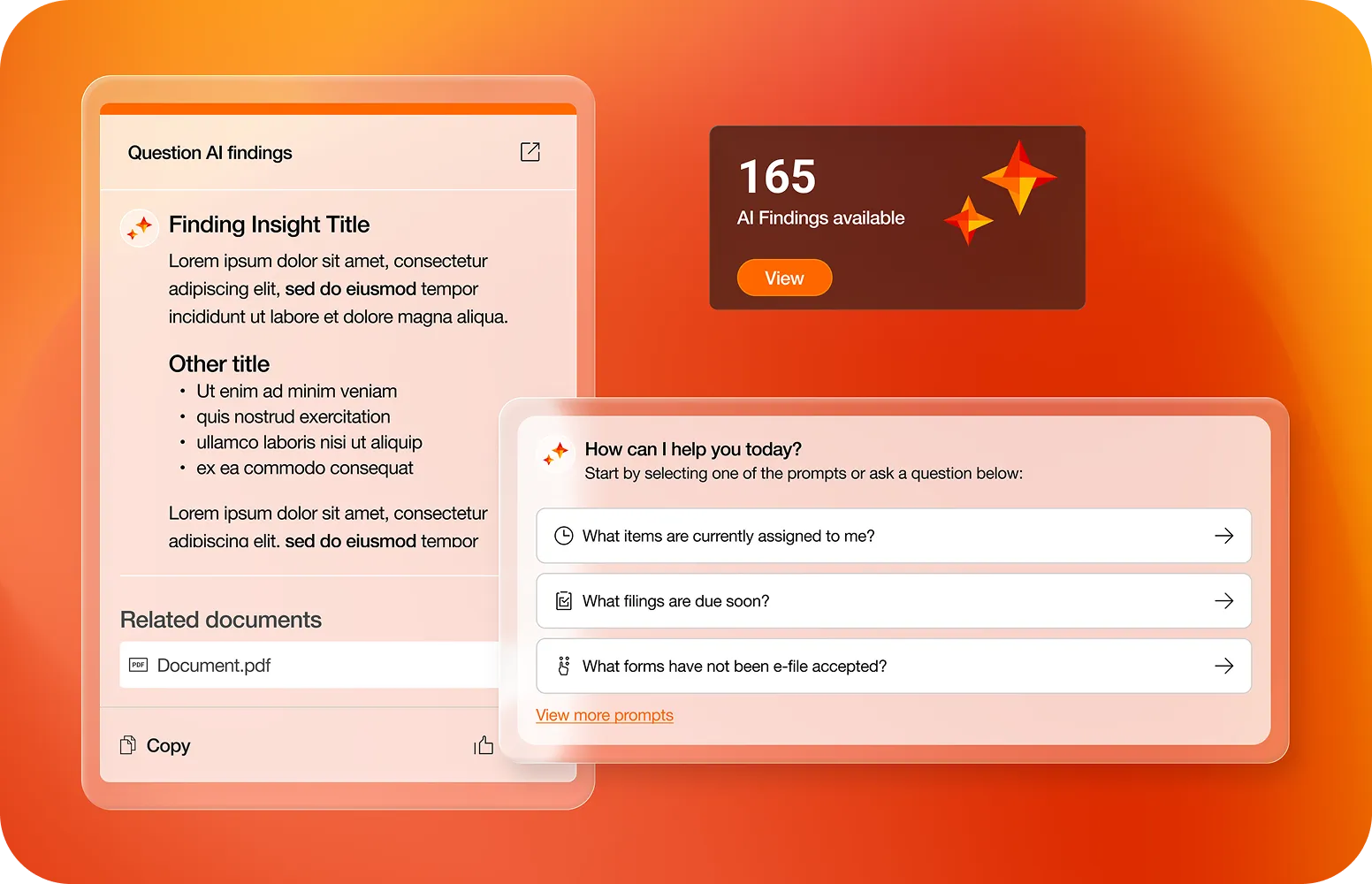

The AI Visual Standard

To accelerate adoption, I designed an exclusive visual identity for AI components with a dual purpose:

For the Auditor

Visually differentiating AI suggestions from native data to shorten the learning curve and prevent confusion during analysis.

For PwC (Scalability)

Establishing a design standard so other teams can integrate AI into their own projects, ensuring a consistent experience globally.

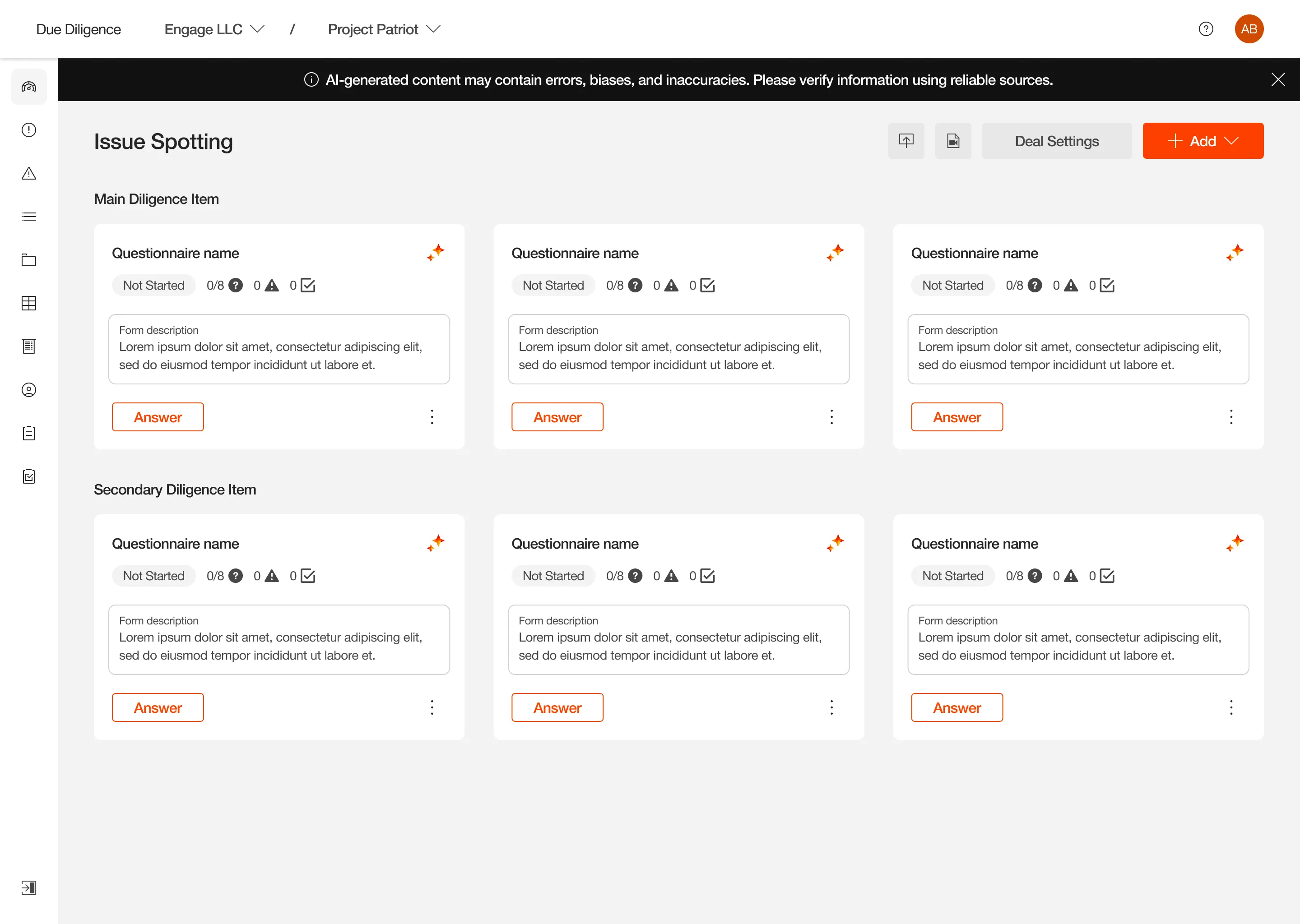

UI Design

Component System

I architected a modular component ecosystem that enables auditors to interact with AI at any point in their workflow, while ensuring full traceability at all times.

Delivery

Functional Implementation

We converted the modules into configurable micro-frontends. This approach enabled engineering to implement AI natively within the workflow, eliminating friction and allowing for scalable adoption.
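To make "configurable micro-frontends" concrete, here is a minimal sketch of a registry that mounts a module at a given workflow step based on configuration. Everything in it is assumed for illustration: the `ModuleConfig` fields, the `ModuleRegistry` class, and the module name `summary-panel` are hypothetical, and mounting returns a plain string so the example runs without a browser.

```typescript
// Hypothetical configuration for one AI module; field names are assumptions.
interface ModuleConfig {
  name: string;           // which module to mount
  mountPoint: string;     // workflow step where it appears, e.g. "Analysis"
  showCitations: boolean; // whether source links are rendered
}

// A mount function turns configuration into rendered output.
type Mount = (config: ModuleConfig) => string;

class ModuleRegistry {
  private modules = new Map<string, Mount>();

  // Teams register a module once; host apps reuse it through config.
  register(name: string, mount: Mount): void {
    if (this.modules.has(name)) {
      throw new Error(`Module "${name}" is already registered`);
    }
    this.modules.set(name, mount);
  }

  // The host workflow mounts a module purely from configuration.
  mount(config: ModuleConfig): string {
    const mountFn = this.modules.get(config.name);
    if (!mountFn) {
      throw new Error(`Unknown module "${config.name}"`);
    }
    return mountFn(config);
  }
}

// Example module: a summary panel that can show or hide citations.
const registry = new ModuleRegistry();
registry.register("summary-panel", (config) => {
  const citations = config.showCitations ? "with citations" : "without citations";
  return `summary-panel @ ${config.mountPoint} (${citations})`;
});
```

A host page would then call `registry.mount({ name: "summary-panel", mountPoint: "Analysis", showCitations: true })` wherever the module is needed, which is the kind of reuse a shared library of micro-frontends is meant to enable.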

Key Takeaways

Design as Governance

In global-scale organizations, design isn't just interface; it's policy. Creating a visual standard for AI unified technical and aesthetic criteria, facilitating seamless adoption across multiple teams within the firm.

Transparency is the new UX

In high-risk environments like due diligence, aesthetics are secondary to traceability. I learned that to overcome technological resistance, design must prioritize evidence and data provenance over total automation.

Systems over Screens

Solving technical fragmentation required shifting from isolated views to designing a modular ecosystem. The product's success lay not in "what the AI did," but in "how it was natively injected" into the pre-existing workflow.

Thanks for sticking around!

If you enjoyed the process or want to dive deeper into any part, let’s chat.